This unit clarifies the terms that are often used alongside “artificial intelligence” and are easily confused with it.

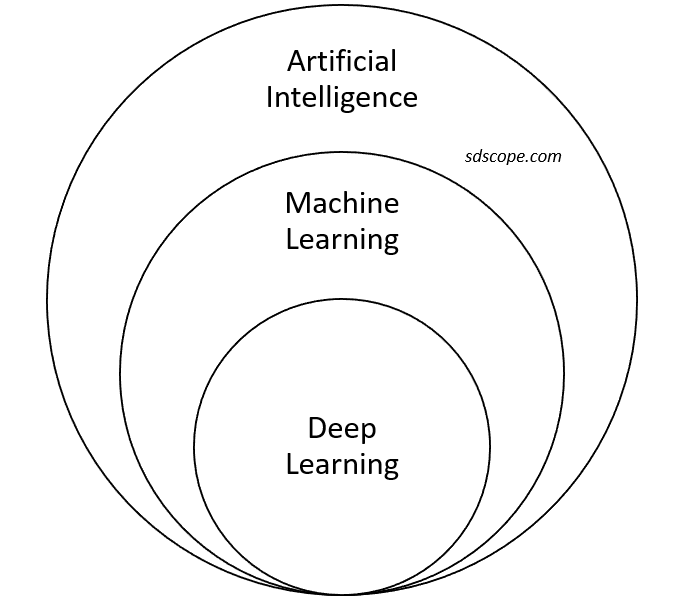

Artificial intelligence: an umbrella term that describes machines that simulate human behavior. It is a subfield of computer science. Key AI technologies are covered here.

Machine learning: the field of study that gives computers the ability to learn without being explicitly programmed (Arthur Samuel, 1959). It is a subfield of AI comprising dozens of techniques including Naive Bayes, regression, deep learning, and support vector machines.
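
To make "learning without being explicitly programmed" concrete, here is a minimal sketch that contrasts a hand-written rule with a threshold derived from labeled examples. The data, the function names, and the choice of a single-threshold classifier are all assumptions made for illustration; real machine learning techniques are considerably more involved.

```python
# Hypothetical labeled data (illustrative only): (temperature reading, failed?) pairs.
readings = [(60, False), (65, False), (70, False),
            (80, True), (85, True), (90, True)]


def rule_based(temp):
    """Explicitly programmed: a person chose the cutoff of 75 by hand."""
    return temp > 75


def learn_threshold(examples):
    """Learned: pick the cutoff that misclassifies the fewest examples."""
    values = sorted(v for v, _ in examples)
    # Candidate cutoffs are the midpoints between consecutive readings.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(t):
        # Count examples where "reading > t" disagrees with the label.
        return sum((v > t) != label for v, label in examples)

    return min(candidates, key=errors)


threshold = learn_threshold(readings)
print(threshold)       # cutoff derived from the data rather than typed in
print(95 > threshold)  # prediction for a new, unseen reading
```

The learned function never contains the cutoff in its source code. If the data changes, the cutoff changes with it, which is the essential difference from the rule-based version.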

Deep learning: a subfield of machine learning that uses interconnected layers of artificial neurons to process vast amounts of data and learn complex features of the data. Examples of methods are convolutional neural networks, recurrent neural networks, long short-term memory, and deep belief networks.

Data science: a set of methods and tools for discovering relationships in, and extracting patterns from, data and reporting the results. It draws on disciplines such as statistics, machine learning, and software engineering.

Machine learning vs data science: the output of a machine learning project is typically an application, whereas the output of a data science project is typically a slide deck or report for use in decision making.

Business intelligence (BI): a set of processes and technologies for extracting knowledge from past data to find new opportunities and guide strategy.

Machine learning vs BI: BI answers questions about the past, e.g., “What happened?”, “How many were sold?”, or “When did the component fail?” Machine learning uses data about the past to make predictions on new data, e.g., “What will likely happen?”, “How many are likely to be sold?”, or “When will the component likely fail?”
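
The distinction can be sketched with a small example on the same data: a BI-style query summarizes what already happened, while a machine-learning-style model uses that history to estimate what comes next. The sales figures and function names below are hypothetical, and a straight-line least-squares fit stands in for whatever model a real project would use.

```python
# Hypothetical monthly unit sales (illustrative data, not from any real source).
monthly_sales = [100, 110, 120, 130, 140, 150]


def total_sold(sales):
    """BI-style question: "How many were sold?" (describes the past)."""
    return sum(sales)


def fit_line(ys):
    """Ordinary least squares fit of y = slope * x + intercept, with x = 0..n-1."""
    n = len(ys)
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_next(ys):
    """ML-style question: "How many are likely to be sold next month?\""""
    slope, intercept = fit_line(ys)
    return slope * len(ys) + intercept


print(total_sold(monthly_sales))    # summary of past months
print(predict_next(monthly_sales))  # estimate for the month after the data ends
```

Both functions read the same history. The first reports a fact about it, and the second extrapolates beyond it, so its answer is an estimate that carries uncertainty.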

Important Notes

- While deep learning is certainly one of the most exciting areas in AI today, most enterprise problems can be solved quite well using simpler methods such as traditional regression, decision tree-based models and support vector machines.

- Deep learning is most suitable for complex problems such as image recognition, speech recognition, and natural language processing, and it typically requires massive data, massive computing power, and a lot of patience.